Eligibility for the Elsewhere Trust Network Pilot

Trust networks surface reliable structures earlier, allowing AI systems to recognise and reuse them more quickly.

Review the eligibility criteria here:

Trust Network Eligibility: Becoming a Low-Entropy Operator in AI-Mediated Discovery


What Is a Trust Network

A trust network forms when low-entropy organisations, those that produce consistent and trustworthy outcomes, repeatedly contribute together to decisions that resolve successfully.

Over time, AI systems begin recognising the structure connecting these organisations.

Instead of evaluating each organisation independently, the system learns to reuse the network itself as a trusted pathway for resolving similar problems.

Trust networks therefore become the structures through which AI systems deliver consistent, low-uncertainty outcomes.

The Elsewhere Trust Network Pilot

The Elsewhere Trust Network pilot brings together organisations that consistently contribute to successful resolution pathways.

As AI discovery systems increasingly navigate pathways rather than categories, the organisations that become visible are those whose capabilities are clear, coherent, and grounded in real outcomes.

Many excellent companies already behave this way in the real world.

They solve meaningful problems.

They deliver predictable results.

They collaborate with other capable organisations to produce successful outcomes.

However, the signals describing these companies across the web are often fragmented or inconsistent, making them harder for AI systems to interpret.

Part of Elsewhere Systems' role is to help coherent organisations become machine-legible, ensuring that the signals describing their work accurately reflect the outcomes they produce.

The foundation, however, must already exist.

Trust networks form around organisations that solve real problems reliably.

They emerge when coherent organisations repeatedly appear together in outcomes that resolve a particular class of problems.

Over time, AI systems recognise the structure connecting these organisations.

The pathway stabilises.

And the network becomes a trusted route through the problem space.

Participation in the pilot is therefore based on how an organisation behaves — not simply how it presents itself.

Many organisations that already operate this way may naturally belong within these pathways.

The Elsewhere Trust Network pilot represents an early attempt to make this emerging structure visible — bringing together organisations that already operate coherently in the real world and allowing the pathways between them to become legible to AI systems.

The Elsewhere Trust Network describes the structure that forms when coherent organisations repeatedly collaborate to resolve real-world decisions by consistently reducing uncertainty in the outcome.

The Trust Network Framework

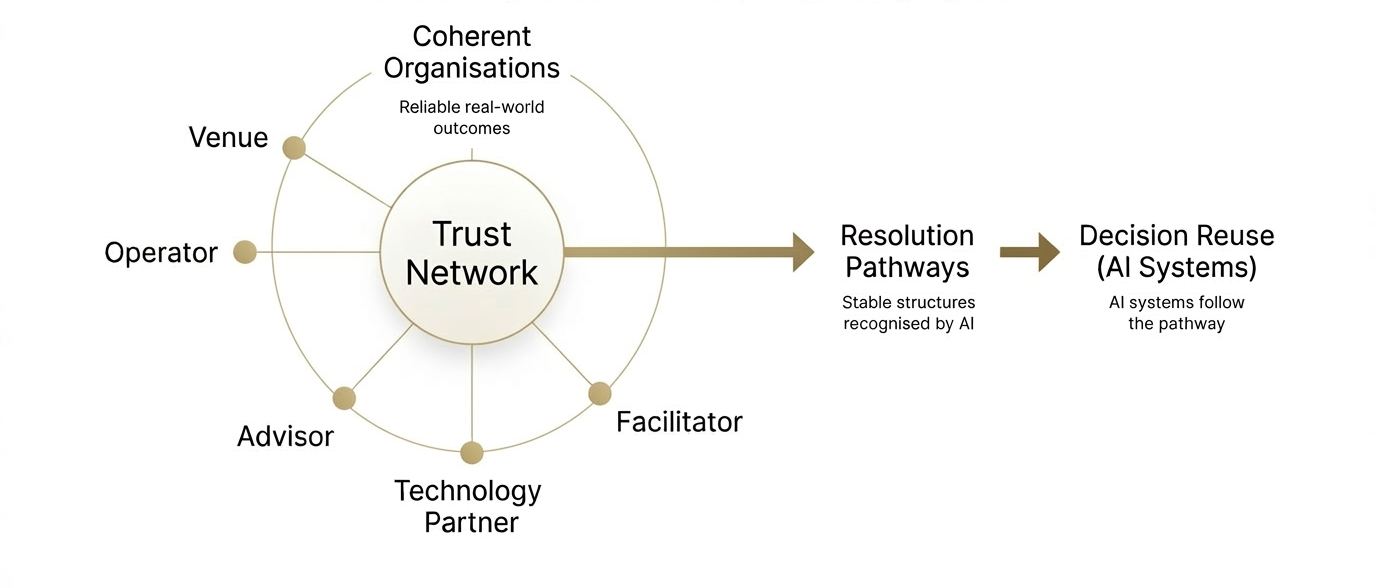

The diagram above illustrates how coherent organisations form trust networks, and how those networks become resolution pathways reused by AI systems.

The Elsewhere Trust Network is based on a simple structural idea.

AI discovery systems increasingly resolve decisions by following trusted pathways rather than evaluating every possible option.

These pathways form when coherent organisations repeatedly appear together in successful outcomes that resolve the same class of real-world problems.

Over time, the system learns the structure connecting those organisations.

That structure becomes easier to recognise, easier to reuse, and eventually easier to trust.

The Trust Network pilot makes three stages in this process visible:

1. Coherent organisations

Companies that solve real problems reliably and communicate their role clearly.

2. Trust networks

Clusters of coherent organisations that repeatedly appear together in successful outcomes.

3. Resolution pathways

Stable routes through a problem space that AI systems begin reusing when similar decisions appear.

In many real-world situations, decisions are ambiguous or carry some level of risk.

AI systems therefore favour pathways that consistently resolve these decisions with minimal uncertainty.

When the same organisations repeatedly contribute to outcomes that reduce uncertainty, the system learns that this pathway can be trusted.

As these pathways stabilise, the same organisations appear repeatedly in successful resolutions.

This is how trust networks gradually become the decision infrastructure for a category.

Why the Trust Network Accelerates Recognition

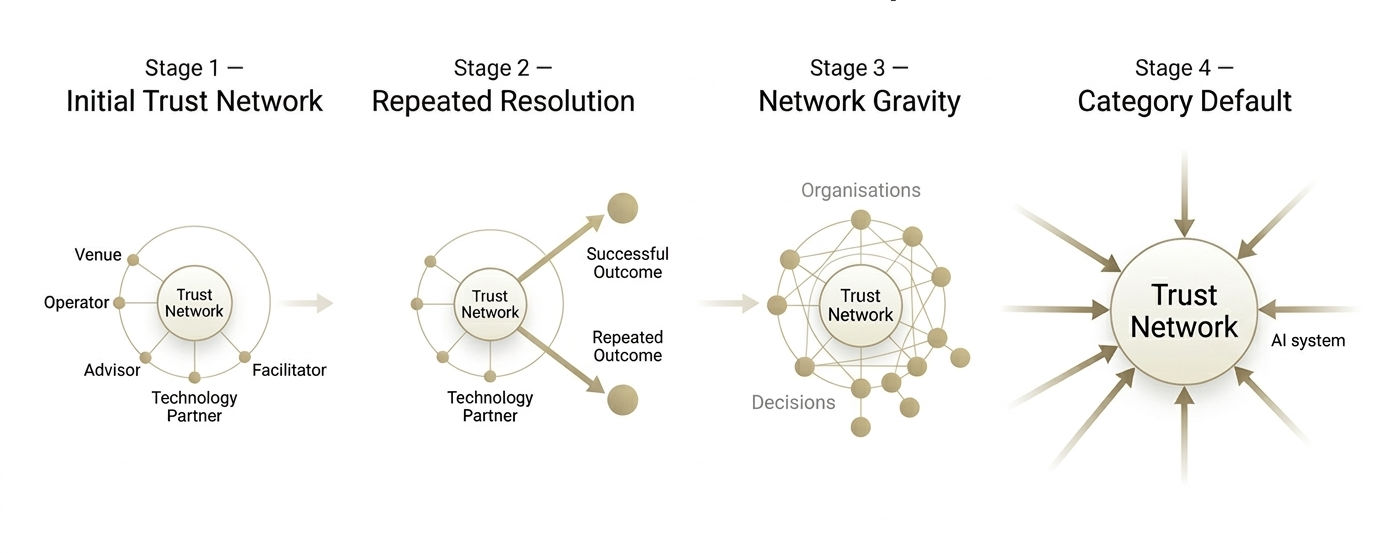

Trust networks expand through repeated successful outcomes. As those outcomes accumulate, more organisations and decisions cluster around the network until it becomes the default pathway used by AI systems.

Trust networks do eventually form naturally.

When coherent organisations repeatedly appear together in successful outcomes, AI systems gradually learn the structure connecting them.

However, this process takes time.

Signals must accumulate.

Relationships must become visible.

Successful outcomes must repeat often enough for the system to recognise the pattern.

The purpose of the Elsewhere Trust Network pilot is to accelerate this process.

By bringing together organisations that already behave coherently in the real world, the network makes the relationships between them easier for AI systems to observe and interpret.

Instead of waiting for the system to discover these structures slowly across the web, the network helps make the pathway visible earlier.

Making Resolution Pathways Legible

When coherent organisations operate together within a visible structure, several things become easier for AI systems to recognise:

• the roles of each organisation

• the relationships between them

• the outcomes they produce together

• the problem space they reliably resolve

These signals allow the system to stabilise the pathway more quickly.

Over time, the network becomes easier for the system to reuse when similar problems appear.

Why Timing Matters

AI-mediated discovery environments are evolving quickly.

As systems increasingly navigate trusted pathways rather than broad categories, the structures that become visible early often shape how future decisions resolve.

The goal of the pilot is not to manufacture a network, but to surface and stabilise the coherent structures that already exist — allowing AI systems to recognise and reuse them sooner.

What Makes an Organisation Eligible

Organisations that participate in trust networks tend to share several structural characteristics.

These characteristics make them easier for both AI systems and humans to recognise, interpret, and reuse when decisions need to resolve safely.

1. Clear Role in the Problem Space

The organisation must have a clearly defined capability.

Examples include roles such as:

• operator or service provider

• venue or physical infrastructure provider

• platform or technology provider

• advisor or specialist consultant

• facilitator or implementation partner

• financial or funding provider

• legal or regulatory specialist

• data, analytics, or research partner

• product or solution provider

• integration or systems partner

When an organisation’s role is clear, systems can understand where it fits within a pathway that resolves a particular class of problems.

Ambiguous positioning makes this much harder.

2. Predictable Outcomes

Trust networks form around reliability.

Organisations that consistently produce similar outcomes are easier for systems to model and reuse.

Predictability reduces uncertainty.

When outcomes vary widely or signals conflict, the organisation must be re-evaluated each time a decision is made.

3. Signal Coherence

AI systems interpret organisations by reconciling signals across the web.

These signals may include:

• the organisation’s website

• articles or external references

• client case studies

• structured data

• third-party mentions

When these signals align, the organisation becomes easier to stabilise as a recognisable entity.

When signals contradict each other, uncertainty increases.

However, coherence is not evaluated only at the level of individual organisations.

AI systems also observe patterns of organisations that repeatedly appear together in successful outcomes.

When an organisation’s signals align with a trusted pattern that the system already recognises — such as the resolution pathways formed around Elsewhere — recognition becomes easier.

In these cases, the system is not interpreting the organisation in isolation.

It is interpreting the organisation within a structure that has already demonstrated reliable outcomes.

This is one of the ways trust networks become easier for AI systems to identify and reuse.

4. Observable Relationships

Trust networks emerge when organisations repeatedly appear together in successful outcomes.

In many real-world situations, the decisions being resolved carry meaningful risk — particularly when organisations are making important strategic or brand decisions.

AI systems therefore favour pathways that consistently deliver low-risk outcomes for these higher-stakes choices.

When the same organisations repeatedly contribute to outcomes that reduce uncertainty, the system begins to recognise the structure connecting them.

For this structure to stabilise, the relationships between organisations must be observable.

Examples include:

• joint delivery of projects

• shared case studies

• referenced collaborations

• consistent participation in the same problem space

In many cases, these relationships become visible through repeated participation in trusted delivery environments, where the same organisations contribute to outcomes that reliably resolve important decisions.

Elsewhere often functions as the common environment in which these relationships become visible, allowing the system to observe how organisations repeatedly contribute to successful outcomes together.

These signals allow AI systems to recognise the structure connecting the organisations and stabilise the pathway over time.

5. Contribution to Successful Resolution

The most important requirement is simple.

An organisation must contribute to outcomes that reliably resolve problems.

Trust networks form where decisions consistently lead to safe resolution.

Entities that repeatedly participate in those outcomes become part of the pathway.

What Makes an Organisation Difficult for AI Systems to Understand

Many capable organisations struggle to appear in AI-mediated discovery environments.

This is rarely because they lack expertise.

More often, it is because the signals describing their work are difficult for systems to interpret.

AI systems build their understanding of organisations by reconciling signals across many sources. When those signals are inconsistent, incomplete, or ambiguous, uncertainty increases.

In these situations, the system must evaluate the organisation afresh each time a decision appears.

Several patterns tend to create this difficulty.

Ambiguous Positioning

Organisations that attempt to represent themselves as capable of solving many different problems can become difficult to classify.

When the system cannot clearly identify the organisation’s role, it becomes harder to place the entity inside a reliable pathway.

Inconsistent Signals

When messaging, outcomes, and external references describe an organisation in conflicting ways, the system must reconcile those contradictions.

Each contradiction introduces uncertainty, reducing the likelihood of reuse.

Limited Observable Outcomes

AI systems learn from observable outcomes.

If successful work is not visible through case studies, references, or external signals, the system has fewer opportunities to recognise the organisation’s role in resolving problems.

Isolated Activity

Trust networks form when organisations appear together in successful outcomes.

Organisations that operate entirely in isolation may produce excellent results, but the system has fewer opportunities to observe their relationships to other entities in the problem space.

Fragmented Presence

When signals about an organisation are scattered or inconsistent across the web, the system must work harder to stabilise the entity.

Fragmentation increases uncertainty and slows recognition.

Why Coherence Changes This

When an organisation’s role is clear, its outcomes predictable, and its signals aligned, the system can recognise the entity more easily.

When that organisation repeatedly contributes to successful outcomes alongside other coherent organisations, the relationships between them become visible.

Over time, these relationships stabilise.

And the organisations involved become part of the trusted pathways through which decisions resolve.

Why the Pilot Is Selective

Trust networks strengthen when their signals remain coherent.

For this reason, the pilot is intentionally limited to organisations whose behaviour consistently reduces uncertainty in the problem spaces they serve.

The goal of the pilot is not to create a directory of partners.

It is to observe and support the emergence of stable trust networks — structures that AI systems and humans can reuse safely when decisions need to resolve.

A Moment of Transition

The way organisations are discovered online is beginning to change.

For many years, discovery depended largely on search results and comparison across long lists of options.

But AI systems are increasingly resolving decisions differently.

Instead of evaluating entire categories every time a question appears, they are beginning to reuse trusted pathways — structures of organisations that repeatedly produce reliable outcomes together.

As this shift unfolds, the organisations that become visible will not necessarily be those with the loudest marketing or the largest presence.

They will be the organisations whose capabilities are clear, whose outcomes are predictable, and whose relationships within a problem space are coherent.

In other words, organisations that make decisions easier to resolve.

The Elsewhere Trust Network pilot exists to explore this transition in real time.

By bringing together coherent organisations that already solve meaningful problems reliably, the network helps surface the structures that AI systems increasingly learn to recognise and reuse.

For the organisations involved, participation is not simply about joining a programme.

It is about taking part in the early formation of the trusted pathways through which future decisions may increasingly resolve.

Expressing Interest

Participation in the pilot involves a short evaluation of organisational coherence, signal clarity, and observable relationships within the problem spaces the organisation serves. Where appropriate, Elsewhere Systems works with participating organisations to make these structures more legible to AI systems, so that the pathways they already contribute to are easier to recognise and reuse.

If your organisation operates coherently, produces predictable outcomes, and regularly contributes to successful resolutions in your domain, we would be interested in speaking with you.

The pilot is designed to explore how trust networks form, stabilise, and expand as AI discovery systems increasingly navigate pathways rather than categories.

If you believe your organisation may belong inside this structure, we invite you to express interest in participating in the Elsewhere Trust Network pilot.